Detecting Propaganda Online

For most people, the digital realm is a source of entertainment and a place to catch up with family and friends. But what happens when it is used to push terrorist propaganda?

To counter this concerning trend, we teamed up with industry experts to develop automated propaganda detection capabilities by using open-source information.

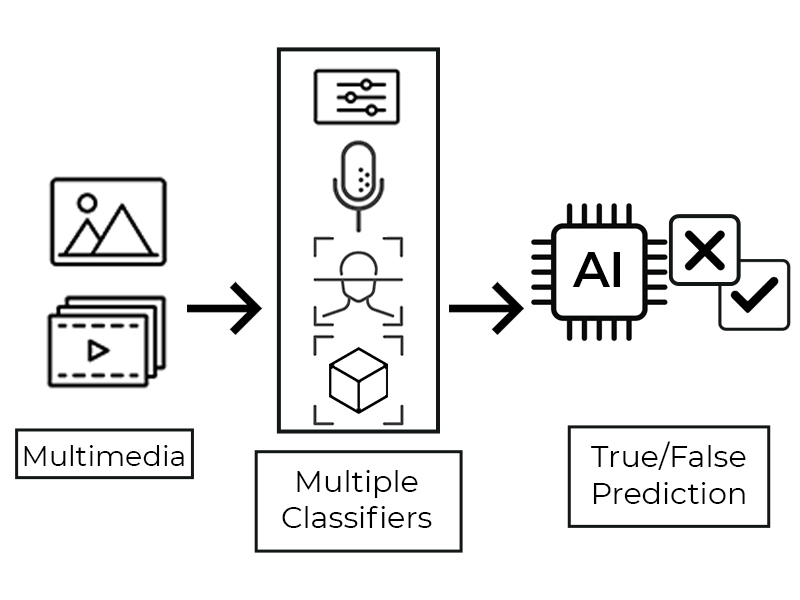

The resulting system includes classification models that use machine learning to analyse publicly available multimedia files such as images and videos. This allows it to flag possible terrorist propaganda, and to provide an enhanced situation picture and unique insights into online perturbations for informed decision-making.

First, distinctive features in the data are identified and extracted for further processing. Each of these features is then modelled by its own classifier, and the classifiers are combined into an ensemble classifier that provides the final prediction score: the higher the score, the greater the likelihood that the data is propaganda. A threshold on this score was also added, so that the classifier can be made more conservative or more permissive.
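The scoring step described above can be illustrated with a minimal sketch. The article does not say how the individual classifiers are combined, so the weighted average here is an assumption, and every name in the code (`EnsembleClassifier`, `mean_score`, the `visual` and `audio` feature groups, the weights and the threshold value) is hypothetical rather than taken from the actual system.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Sequence

# Hypothetical type: a per-feature classifier maps feature values to a score in [0, 1].
FeatureClassifier = Callable[[Sequence[float]], float]


@dataclass
class EnsembleClassifier:
    """Combines per-feature classifier scores into one prediction score.

    Assumption: scores are combined by a weighted average. The real
    system may use a different combination rule.
    """
    classifiers: Dict[str, FeatureClassifier]
    weights: Dict[str, float]
    threshold: float = 0.5

    def score(self, features: Dict[str, Sequence[float]]) -> float:
        # Weighted average of the individual classifier scores.
        total_weight = sum(self.weights[name] for name in self.classifiers)
        weighted_sum = sum(
            self.weights[name] * clf(features[name])
            for name, clf in self.classifiers.items()
        )
        return weighted_sum / total_weight

    def is_flagged(self, features: Dict[str, Sequence[float]]) -> bool:
        # A higher threshold flags less content; a lower one flags more.
        return self.score(features) >= self.threshold


def mean_score(values: Sequence[float]) -> float:
    """Toy stand-in for a trained classifier: the clamped mean of its inputs."""
    return min(1.0, max(0.0, sum(values) / len(values)))


# Illustrative feature values only; not real data.
sample = {"visual": [0.9, 0.7], "audio": [0.2, 0.4]}

ensemble = EnsembleClassifier(
    classifiers={"visual": mean_score, "audio": mean_score},
    weights={"visual": 2.0, "audio": 1.0},
    threshold=0.6,
)

print(ensemble.score(sample), ensemble.is_flagged(sample))
```

Raising `threshold` means fewer files are flagged, at the risk of missing some propaganda, while lowering it flags more files at the cost of more false positives.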

To achieve this, it was important to first work out the discerning features that distinguished terrorist propaganda. It was quite a tricky matter, as mainstream media could also include snippets of such content as part of their reporting.

Senior Engineer (Information) Michael Lee said: “Working together with industry experts, we took special care in adding negative ‘edge case’ data for training, which is essentially data that could look and sound like propaganda material in terms of style and composition but is in fact just harmless mainstream content. Through this, we were able to reduce the system’s false positive rate significantly.”

Beyond just building the model and ensuring its accuracy, the team also had to consider many other aspects when tailoring the capability for operational needs. Principal Engineer (Information) Ian Lee explained: “The system had to be fast, reliable and all-encompassing. This meant that its inference speed had to be quick enough to provide a high throughput, and its service had to be reliable enough to handle heavy load for extended periods of time. In addition, it also had to be able to accept a variety of multimedia file formats, from .gif to .mov.”

Continuous learning and industry collaboration were key to the project’s success. To pick up best practices, the team conducted a week of collaborative coding sprints with industry partners, learning more about development processes in artificial intelligence (AI) so that it could better develop and refine the system. The team also attended technical sharing sessions where data scientists talked about workflows and considerations in bringing AI models into production.

Michael added: “It was important for us to be learning constantly, so we could incorporate the best of commercial practices into our workflow. The experiences shared by industry experts provided valuable insights into how best to build lean technological frameworks into our work. This allowed us to glean new ideas and make an impact not just for this project, but in our other projects as well.”